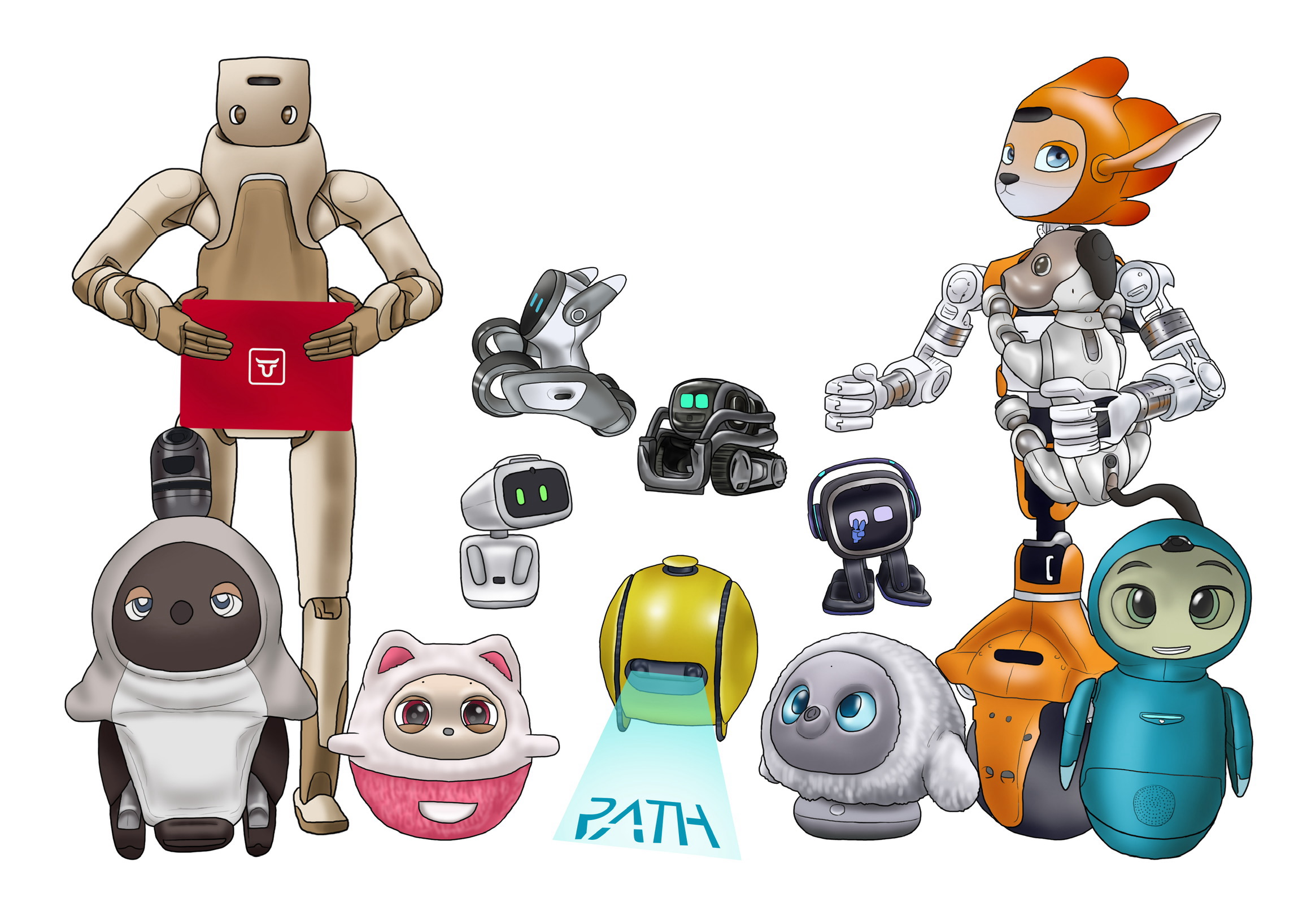

Why can anyone make a "soul" now?

Why has it become so easy to build advanced pet robots? Behind it is a revolution in both hardware and software.

First, the hardware.

The technology for "mapping a room and moving around while avoiding obstacles" (SLAM, simultaneous localization and mapping) has been fully modularized and has become surprisingly cheap thanks to the spread of robot vacuum cleaners. What was once available only to research institutions and major manufacturers can now be bought as an off-the-shelf part that anyone can use.

Next is the expression of the "eyes." In the past, a custom-made spherical display was required, but now you can implement "moist pupils" at low cost simply by combining a high-definition LCD panel made for smartphones with a game engine such as Unity.

In addition, the "warmth" that LOVOT arrived at through trial and error can be reproduced convincingly enough with a little ingenuity in combining simple heaters and insulation.

However, the biggest change is on the software side, especially the arrival of LLMs (large language models) and VLA (vision-language-action) models. In the past, making a robot "behave like a living thing" required writing a huge number of conditional branches. Humans defined rules such as "be sad when hit" and "be happy when stroked" one by one.
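To make the older approach concrete, here is a minimal sketch of what such hand-written rules might look like. The function name `react` and the event and emotion strings are hypothetical, chosen only to mirror the two rules mentioned above; no real robot's code is being quoted.

```python
# Hypothetical hand-written behavior rules (illustrative names only).
def react(event: str) -> str:
    """Map a sensed event to an emotion using explicit conditional branches."""
    if event == "hit":
        return "sad"
    elif event == "stroked":
        return "happy"
    # Any situation the developer did not anticipate falls through to a default.
    return "neutral"


if __name__ == "__main__":
    for event in ("hit", "stroked", "waved_at"):
        print(event, "->", react(event))
```

Every new situation means another branch that a human has to think of and write, which is why this style grows so quickly.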

But now it's different. Just by feeding the video captured by the camera into an AI model, actions such as "the person in front of me is laughing → so I'll approach and act affectionate" are generated semi-automatically. Behavior has changed from something that is "programmed" into something that is "interpreted."
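The shape of that newer loop can be sketched roughly as follows. Everything here is an assumption made for illustration: `Action`, `behavior_step`, and `fake_vision_model` are hypothetical names, and the stub takes a text description instead of a real camera frame so the example runs offline. A real system would call an actual vision-language or VLA model in its place.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str    # what the robot should do, e.g. "approach"
    reason: str  # the model's interpretation of the scene


def fake_vision_model(frame_description: str) -> Action:
    """Stand-in for a call to a vision-language model (hypothetical stub).

    It inspects a text description rather than pixels; a real model
    would produce the interpretation from the camera frame itself.
    """
    if "laughing" in frame_description:
        return Action("approach_and_act_affectionate",
                      "the person in front of me is laughing")
    return Action("idle", "nothing notable in view")


def behavior_step(frame_description: str,
                  model: Callable[[str], Action] = fake_vision_model) -> Action:
    """One perception-to-action step: the robot code holds no behavior rules."""
    return model(frame_description)


if __name__ == "__main__":
    action = behavior_step("a person laughing on the sofa")
    print(action.name, "because", action.reason)
```

The point of the design is that `behavior_step` stays the same no matter how many situations the robot encounters; the interpretation lives in whichever model is plugged in.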

It may not match the "soul" that lives in the original LOVOT. But if a "soul-like something" is enough, anyone can now make one by combining APIs.

This phenomenon is itself only the tip of a huge iceberg called "physical AI."